The Education Gadfly Weekly: Time for a ceasefire in the civics wars

Time for a ceasefire in the civics wars

The conflict over civics education is unnecessary, driven more by cultural combatants and politicians than by vast divides among parents and citizens regarding what schools should teach and children should learn. If those who inflame these debates would hold their fire, we could build on a latent accord among the clients of civics education.

Time for a ceasefire in the civics wars

The history of ed reform shows that progress is possible

Teacher evaluation reform was very successful—on paper

Examining the evolution of the college wage premium—and its biggest beneficiary

American learning losses in the pandemic era: A global perspective

#917: The end of Chevron deference, with Joshua Dunn

Cheers and Jeers: April 25, 2024

What we're reading this week: April 25, 2024

Time for a ceasefire in the civics wars

How about a ceasefire in the civics wars? Possibly even a peace treaty? This could turn out to be easier to achieve than pausing the conflict in Gaza (or Kashmir or Sudan or…). The world’s big fights generally arise from opposed interests and disputes over fundamentals. Looking from afar at American civics education, one might think the same: hopeless divisions over what should happen in civics classrooms, textbooks, and assessments. Should they focus on “how government works” or “what can I do to change things?” Is this subject about knowledge or action, information or attitudes, facts or dispositions? Rights or obligations?

Yet unlike disputes that pit country against country and terrorist against nation state, much of the civics conflict is unnecessary, driven more by cultural combatants and politicians than by vast divides among parents and citizens regarding what schools should teach and children should learn. If those who inflame these debates would hold their fire, we could build on a latent accord among the clients of civics education.

The evidence has been rolling in for years.

The University of Southern California’s Dornsife Center, for example, surveyed 1,500 K–12 parents in 2021 and reported that:

…parents across political parties feel it is important or very important for students to learn about how the U.S. system of government works (85 percent), requirements for voting (79 percent), the U.S.’s leadership role in the world (73 percent), the federal government’s influence over state and local affairs (72 percent), how students can get involved in local government or politics (71 percent), benefits and challenges of social programs like Medicare and Social Security (64 percent), and contributions of historical figures who are women (74 percent) and racial/ethnic minorities (71 percent).

A year later, the Jack Miller Center surveyed parents of elementary-secondary pupils and found that “89 percent agree that a civic education about our nation’s founding principles is ‘very important.’” This semi-consensus also extends to history class: “Over 92 percent of parents believe that the achievements of key historical figures should be taught even if their views do not align with modern values—cutting against the narrative that America is firmly divided on how to teach students about the founders and the country’s history.”

As is clear from Dornsife’s percentages, we’re not looking at total consensus, just widespread agreement on fundamentals. Get into hot topics like gender, abortion, and racism and plenty of Americans want their kids’ schools to convey a one-sided view or avoid the issue altogether. Yet nearly everyone wants students to learn how to analyze issues, to understand why people argue about them, and how a democratic republic attempts to navigate them. Nearly everyone wants kids to understand those mechanisms—why we have the kind of government we do, where it came from, how it works, and the principles that drive it. And everyone, I’m pretty sure, wants their children to grow up to be good citizens.

The hard part—even after professional warriors drop their weapons—is turning the latent consensus into concrete standards, curricula, and pedagogy. As Frederick Hess and Matthew Rice noted in 2020, after leading a series of bipartisan discussions at the American Enterprise Institute, there is “widespread agreement on many...of the goals of civics education” but “little agreement on how to get there.”

Toward that end, several recent initiatives have revisited what should actually be taught. Probably the two best known are the Educating for American Democracy “roadmap” (EAD), launched—with a grant from the National Endowment for the Humanities—by iCivics with a broad-based group of academics and K–12 practitioners, and “American Birthright,” a set of “model K–12 social studies standards” produced by the “Civics Alliance,” which was convened by the National Association of Scholars.

EAD claims to offer “a vision for the integration of history and civic education throughout grades K–12.” This forty-page document abounds with questions that students should grapple with, not things they should know. (“What can we learn from historical leaders even when we disagree with their actions and values?” “What fundamental sources and texts in American constitutionalism and history do you invoke to help you understand current events? What gives those sources credibility and authority?”) It’s squarely on the “inquiry” side of the curriculum, not a list of people, events, and structures, which is why it’s thought by many to represent the “progressive” side of the civics debate. Yet the questions it poses can’t be answered very well unless one also knows stuff, so it does furnish a framework on which to hang a thorough and ambitious curriculum, provided someone adds the “content” that teachers and their pupils will need.

Content is what “American Birthright” is all about. Its 115 pages also offer a framework—up to a point. They abound in names, events, and dates, which is why these model standards are widely viewed as coming from the “traditional” side. The document also poses explanatory and discussion challenges but tends to frame them as simplified admonitions about big, complicated topics: “Explain why free people form governments to defend their liberty”; “Describe how citizens demonstrate civility, cooperation, self-reliance, volunteerism, and other civic virtues.” Those are obviously important things to do, but really hard unless one has already acquired EAD-style analytic skills, as well as factual knowledge.

In my view, an amalgam of the best of EAD and American Birthright would make for an awesome social studies plan, albeit one that would occupy far more school time than is typically allotted to these subjects today. Such a blend would also take advantage of the latent consensus about what kids should learn.

That doesn’t mean civics classes in Dallas would be identical to civics in Seattle. There’s no reason to expect matched curricula or teaching styles across a vast nation with a decentralized K–12 system governed almost entirely by states and communities. Yet we need some shared understanding of what it means to be an American and what’s changed—and hasn’t—over these several centuries.

One truly basic version is embodied in the test that immigrants must pass to become American citizens. Administered by U.S. Citizenship and Immigration Services (USCIS), it consists of 100 knowledge-centered questions about history and civics. (“What stops one branch of government from becoming too powerful?” “Why do some states have more Representatives than other states?” “Before he was President, Eisenhower was a general. What war was he in?”) Those taking it face only ten questions—but since nobody knows which ten they’ll get, preparing for the test means learning the answers to all hundred.

Knowing those things is just a start on real citizenship, but it’s not a bad threshold to ask people to cross. That’s why at least eight states now require high school students to pass some version of the test. And a team at Arizona State University has amplified it into an actual curriculum by adding original sources, study guides, teacher materials, and other supplements meant to “exceed the USCIS test in helping students learn not just the facts tested but [also] the underlying concepts, ideas, and events.”

This, too, is a potential path toward curricular consensus. Yet instead of striving for a unified approach to revitalizing civics and history across the land, we see potshots and diatribes. The Civics Alliance, for instance, slams the College Board’s excellent Advanced Placement course in civics and American government because it includes an “action” component—never mind that the course, which was developed jointly with the National Constitution Center, is anchored to Supreme Court decisions and is impressively balanced. And the lead author of American Birthright gave an “F+” to EAD, declaring that its “light amount of traditional civics content” is “surrounded by the far heavier emphasis on hollow educational ‘skills,’ video games civics, and a very large amount of radical action civics.” He went farther, denouncing all forms of “bipartisan cooperation” in this realm because “the radicals conceive of ‘civics’ as a mean to eliminate their political opponents from the public square and because civics reformers are only accepted in such ‘bipartisan’ endeavors as very junior associates rather than equal partners.”

That’s what culture warriors do, but they don’t all come from the right. EAD has also been pilloried from the left, both by progressive academics who deplore its decision to leave actual curriculum (and test) development to state and local sources rather than propagating a national plan, and by equity hawks who find it soft on “empowerment” and “social justice” issues.

Teachers, too, sometimes add to the discord and suspicion because many who teach these subjects don’t view them the same way their clients do. As Hess and Michael McShane note in their new book, Getting Education Right, drawing on a RAND survey of social studies teachers, “Barely half deemed it essential that students understand concepts like the separation of powers or checks and balances.” A broader RAND survey of K–12 instructors found “that more...think civics education is about promoting environmental activism than ‘knowledge of social, political, and civic institutions.’”

Is the potential juice worth so many squeezes? Why keep struggling to fend off the warriors and redirect the instructors? We know that earlier efforts at civics revival have petered out or been reversed, and that the quest for concord is slower and a lot less fun than hurling brickbats.

So why persist? The country has muddled through despite the fact that Americans know next to nothing about civics or history—what editorial cartoonist Pat Oliphant once called a “forest fire of ignorance.” Never mind that just 22 percent of eighth graders were “proficient” in civics on the recent National Assessment and barely 25 percent of college-age Americans know that the vice-president breaks ties in the Senate. (More think that’s the responsibility of the Speaker of the House!) How much does it really matter in the real world that they understand so little about government?

Yet muddling through yesterday is no guarantee of successful muddling tomorrow. Our citizenship woes grow more consequential as people’s faith in democracy itself falters. YouGov reported late last year that almost a third of young Americans agree—many of them strongly—with the statement “Democracy is no longer a viable system, and America should explore alternative forms of government.”

Why believe in something that you barely understand or were never taught and feel no role in?

Civic ignorance is a silent killer, akin to high blood pressure, easy to ignore or take for granted even as it accompanies and hastens the onset of more serious maladies. Deteriorating norms of behavior, vulnerability to fake news and conspiracy theories, inability to compromise, isolation from civil society—all are associated with not knowing or caring much about the functions of government, the principles that underlie it, or the historical saga that explains why we have the kind we do, where it has succeeded, where it has faltered, how it has changed.

Over time, like persistent hypertension, accumulated ignorance makes a difference. As we huddle in separate ideological (and socioeconomic and ethnographic) silos and accustom ourselves to cruder language and worse conduct, especially in the public square—“defining deviancy down,” as famously phrased by my own mentor, the late Senator Pat Moynihan—our civic and citizenship challenges mount. It’s no surprise that people, especially the young, grow more cynical and pessimistic, more open to alternatives such as strong leaders who don’t have to bother with messy elections of the “free and fair” variety.

Because people’s attitudes and actions in the civics-and-citizenship realm are shaped by a hundred forces, schools and colleges bear limited responsibility. But when it comes to old-fashioned ignorance, formal education has a big role—and was playing it poorly before anyone heard of “culture wars.” For decades, civics has loomed small in the curriculum, standards have been low, requirements few (and declining), instructors often ill-prepared—and in few places are schools, teachers, or students held to account for whether anything gets learned. Rare is the college that requires its students to study civics, and almost as rare are colleges that even offer such courses.

Yes, most high schoolers must take a course in civics or government—though a dozen states have no such graduation requirement, and most of those that do mandate just a single semester. Some administer a statewide “end of course” exam, but almost nowhere do students actually have to pass it. In Maryland, where I live, the test score counts for 20 percent of a student’s course grade, while teachers determine 80 percent. (Until recently, passing the exam itself was prerequisite for a diploma, but that was seen as too onerous and punitive, particularly for poor and minority students.)

When the Thomas B. Fordham Institute evaluated state academic standards for civics (and U.S. history) in 2021, reviewers gave A ratings to just five jurisdictions, while judging twenty-one to deserve a D or F. Common failings, said the reviewers, included “overbroad, vague, or otherwise insufficient guidance for curriculum and instruction” and neglect of “topics that are essential to informed citizenship and historical comprehension.”

Weak standards, low expectations, few requirements, practically no accountability, ill-prepared (and oft-misguided) teachers, and too little time in the curriculum. A mess, to be sure, yet it’s hard to muddle through with a population that’s gradually untethering from democracy, that knows not how a Senate tie gets broken, and that’s more engaged with video games than understanding elections or attending to issues before the town council. Nor should we look forward to a day when schools, to the extent that they teach the subject at all, are confined either to progressive “action civics” or MAGA-style “patriotism civics.” It’s one thing for the country to evolve politically toward blue and red but quite another for young Americans not to know enough to see what they have in common.

Whether we center a curricular renaissance on the citizenship test, on an arranged marriage between EAD and American Birthright, or on something entirely different, it’s important to persist. And instead of getting depressed by the challenges ahead, let’s recall once more that, in this realm, there’s far greater agreement than argument across the land on fundamentals. It’s a ceasefire that most Americans would cheer.

Editor’s note: A version of this essay was first published in two parts by The 74.

The history of ed reform shows that progress is possible

When my daughters were preteens, they came home from school one day alarmed. During a lesson on climate change, the teacher or some part of the lesson (it was never quite clear which) had basically stated that, absent radical attention to warming, there would be little hope for survivability on earth after 2030. This was during peak Greta Thunberg–mania.

I remember having a few thoughts. First, I’m deeply concerned about climate change, but what was reported to me by the kids isn’t what the evidence shows and is a bananas lesson. But also, even assuming this is what the evidence convincingly shows, these are really young kids. What would be the point of telling them this?

This episode recurred to me recently in the context of the constant, and weirdly fashionable, bellyaching by reformers about how education reform has accomplished little, if anything at all. Oddly, this is one place reformers and anti-reformers now seem to agree, even as the evidence indicates it’s not true. What’s more, even assuming it was in fact the case, if you’re an advocate, why the hell would you run around braying it?

Has everything gone swimmingly? Of course not. But in the context of U.S. social policy, until a few years ago, we were making what would be considered good progress. (It’s worth noting how long social policy change takes in a country like this. Perhaps the recent quite rapid progress on a few issues like gay and transgender rights has skewed perceptions.)

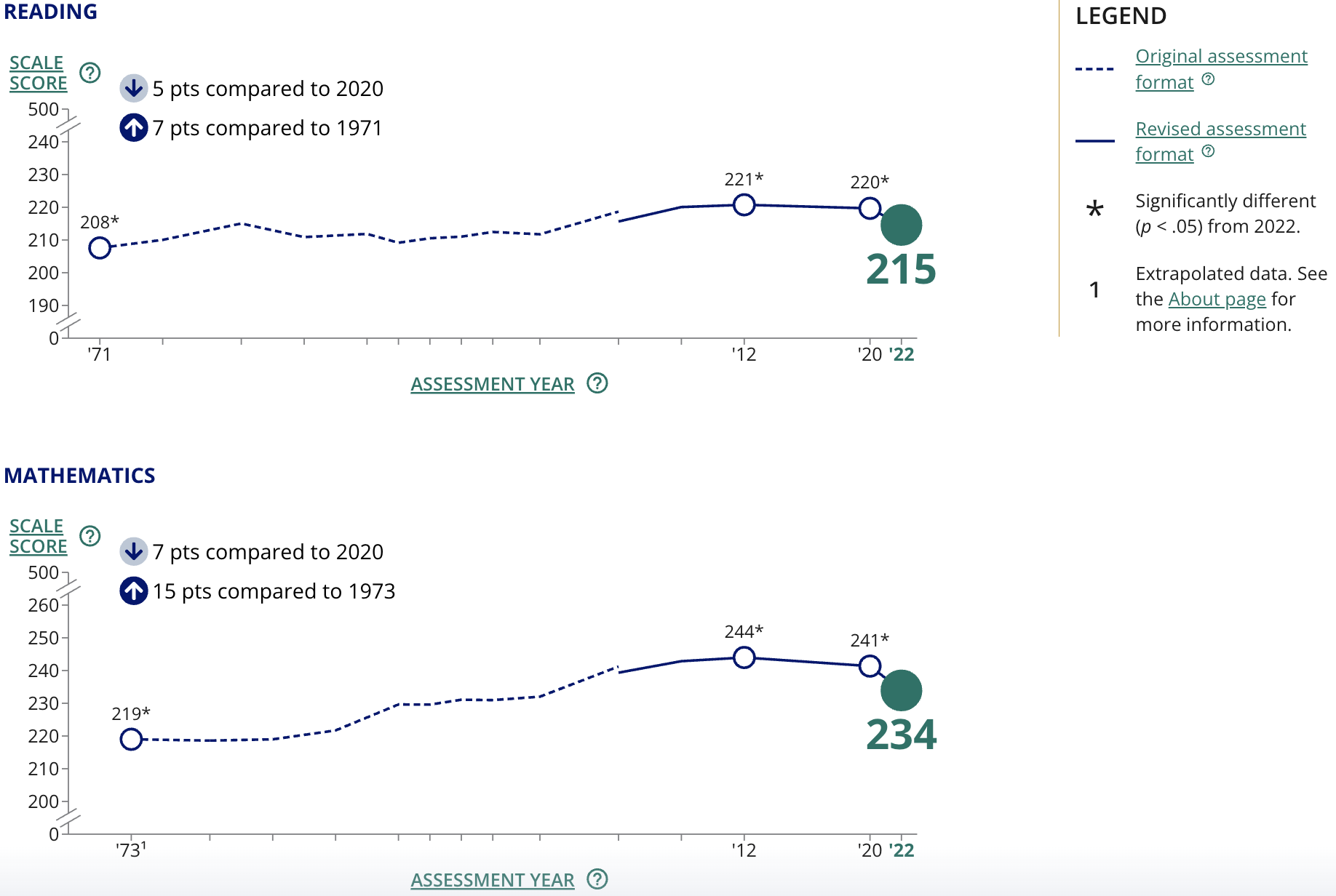

Until the Obama Administration’s and Congress’s decision to throw in the towel on accountability, student achievement was on a steady, if modest, growth path. (See figure 1.) Students furthest from opportunity were making gains. Not enough, but gains. The pandemic, of course, scrambled everything, as did obliviousness by both the Trump and Biden teams, but we should not lose sight of those earlier trajectories, which are averages across an entire student population. People will argue about counterfactuals and the pace of change, but “barely budged,” which you often hear, this is not.

Figure 1. Trend in NAEP long-term reading and math average scores for nine-year-olds

Source: “Explore NAEP Long-Term Trends in Reading and Mathematics,” U.S. Department of Education (accessed April 2024).

Tom Kane, while being perhaps the most passionate voice on the urgency of pandemic learning loss, also suggests that we may have the longer-term story of achievement wrong. A deeper dive he and several colleagues conducted found that “income-based achievement gaps in fourth and eighth grade narrowed between 1992 and 2015—while math scores rose at all income levels.” Similarly, Rick Hanushek and several colleagues found “a steady, albeit modest, reduction in the SES-achievement relationship over the past four decades.” Other data indicate the same thing about low-achievers—notable progress during the standards-era toward what we used to call equity.

Time to throw a party? Nope. The data are all as sobering as they are encouraging. But it does mean the Dante-like, abandon-all-hope approach to talking about educational progress is not only politically stupid whether you’re a reformer, staunch public school supporter, or both—it’s also empirically questionable.

There are plenty of other examples of authentic progress, usually beyond what was promised or expected.

I remember being lectured by the “smart set” in education that KIPP would never have more than fifteen schools and was consequently more or less a distraction I was naïve to even be interested in. (The same people said Teach For America would be perhaps a boutique program of 500 teachers a year if they were really lucky.) By extension, most people thought Bill Clinton’s goal of 3,000 charter schools was just throwaway political hyperbole. Today, there are more than 7,000 charter schools (and about 275 of them are KIPP schools). Are all charters fantastic? No. But many are, and the data show, on average, that they’re getting better and continue to be a good bet as a policy.

More people are going to college, too, and more importantly, graduating. Again, this is not an unvarnished blessing, given the mixed quality and return on investment of higher education, but it is also, on average, for the good—especially for low-income students in quality higher education programs.

Standards are also improving. There is plenty to fault in President Obama’s education record, but it seems inarguable that, in aggregate, state standards were better in 2016 than in 2008, and he and Arne Duncan played a role in nudging that along. Standards are also more specific and actionable for teachers and more often aligned with curriculum. We’re also seeing a boom in high-quality curricula hitting the market, and the focus on curriculum is a welcome and noteworthy change. In addition to being good for students, curricular support makes the job of teaching more sustainable.

That’s in part because of another good-news story: We’re finally getting serious about early reading combined with knowledge-rich curricula. The politically fashionable but largely ineffective approaches are being sidelined, and an evidence-based approach to literacy is taking hold. Is this arguably a half-century overdue? Yes. But you can bemoan that or appreciate that late is better than never. It’s still progress.

On all of these issues, we saw a combination of government activity, philanthropy, and social entrepreneurs of different sorts. The private sector also played an important role in some key aspects, including innovation. And that activity, for a time, engaged journalists and policy leaders. It was exciting.

If we’re being honest, the biggest barrier to a lot of changes has been and continues to be the constantly changing fads and politics in education. On reading, curriculum, or assessment, too many people figure out what is politically fashionable and proceed from there. I’ve had people tell me that phonics is just a Republican way of teaching reading. That’s idiotic. That sort of ethos is why the gap between evidence and practice is often so substantial and preference falsification is rampant.

This is also the same problem we see now with everyone having the mopes about education improvement and reform. Like those tan shoes with white soles it seems like every dude was suddenly wearing, it’s just the fashionable thing.

It’s remarkable how often you hear at an education meeting that little or nothing has improved in schools, or even that things are basically the same as they were during Jim Crow. It’s complete nonsense, of course. Most people are smart enough, well-read enough, and aware enough of the larger world to know this (at least I sure hope that’s the case). Yet it’s the fashion right now to be in that mode. You get socially and professionally rewarded for it even as it’s creating a culture at odds with high expectations and optimism for young people.

We’ve also created a culture where saying there has been progress or things aren’t so bad is likened to being blind to the problems. Progress and continuing challenges are not mutually exclusive. This country and its K–12 sector have made enormous progress—and there is still a lot of work to do.

But, to do that work, you obviously need people to believe it’s possible. That’s why saying there is no progress is insane as an advocacy strategy. Joe Biden is not going to lead a parade, but he’ll get in front of one. So will most pols. But who wants to lead or be anywhere near a parade of Eeyores? Right now, the message to politicians, philanthropy, and media is a dour if not repellent one.

Sure, if you genuinely believe education is not a lever for change or empowerment, then you should go work in a different sector or on different issues. Reasonable people can disagree about the best way to effect change. Otherwise, let’s stop admiring the problems and get back at it with a lot more energy, new ideas, and some fresh arguments and debates.

Here’s the bottom line: Until the one-two punch of ESSA and the pandemic, achievement trends were going in the right direction—especially for students on the wrong end of the achievement gap. And the supports for students, whether new school options or curricula, are improving. There is pent-up demand, and increasingly supply, around innovation.

This is not a bad time to work in education. It should be an exciting time. Reform has hardly been flawless and hasn’t achieved its loftier goals, but at the same time, evidence of progress is all around. This sort of fits-and-starts progress is how social policy change generally happens. In fact, given how stubbornly resistant to change the education system is, how political, and how fad driven, the narrative that nothing has worked is pretty much backwards. We should say so. And then act accordingly.

Editor’s note: A version of this essay was first published on the author’s blog, Eduwonk.

Teacher evaluation reform was very successful—on paper

Editor’s note: This is the second part in a series on teacher evaluation reform. Part one recalled how teacher evaluation became a thing. This was first published on the author’s Substack, The Education Daly.

Today, I’ll summarize the heyday of this movement, which lasted roughly six years from 2009 to 2015. It hit the rocks as quickly as it began—and not necessarily because it didn’t “work,” so to speak. Here’s how it happened.

What were we thinking?

There was broad support across the political spectrum for improving teacher evaluation. But it’s most deeply associated with that cast of characters called ed reformers, of whom I’m one. Our movement, sometimes portrayed as consisting of alumni from Teach For America’s first fifteen years, but better understood to include a multi-generational amalgam of frustrated civil rights organizations, maverick district administrators, and assorted malcontents, shared a set of beliefs that animated our work. Others may put it more eloquently, but my description of our thinking in the early 2000s would go something like:

- America’s achievement gaps are shameful—and fixable. We were exceptionally optimistic about the potential of every child to succeed academically. Poverty could not be accepted as determinative. Low performance stemmed from educational neglect and low expectations.

- Our optimism about children was combined with extreme cynicism about the systems that schooled them. These were the villains. They watched kids fail and then blamed them for it. They defended practices like “last in, first out” layoffs that may have been preferable to adults but were demonstrably harmful to students.

- There was scant margin for error. As blue collar jobs disappeared, kids would be punted into a thankless economy and forced to scrape for a comfortable profession. Failure at school would mean failure in life.

- Instead of fixing individual schools—or even districts—we needed to focus on whole systems. Think bigger. Stop tinkering around the edges. Don’t just raise student performance, eliminate the gaps entirely—to zero.

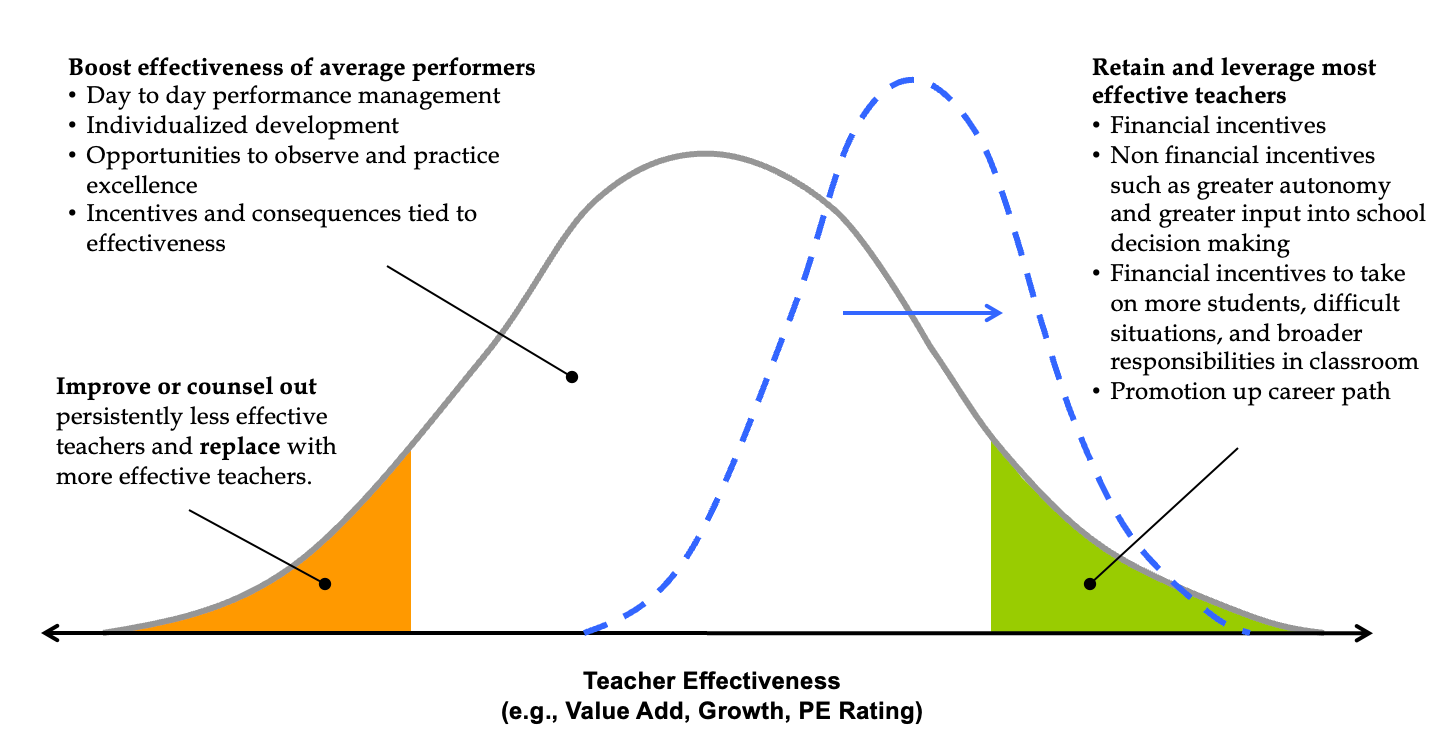

- The data were clear. Acting on the differences in effectiveness between teachers—by improving or dismissing the lowest performers and retaining the highest performers—offered the greatest potential to raise student achievement of any available lever. By heart, we quoted excerpts from research papers like “Having a top-quartile teacher rather than a bottom-quartile teacher four years in a row could be enough to close the Black-White test score gap.”

- Systems wouldn’t take this lying down. They were made to resist, to wait out. Any short-term change would be washed away overnight like a sandcastle.

I’m oversimplifying only slightly in saying we felt we had been presented with a math function. There were some low-performing teachers. Past patterns suggested they were unlikely to improve. If they exited the profession and were replaced by typical new hires—and if, at the same time, more of the highest-performing teachers were retained for more years—achievement would rise significantly. The question was whether we had the courage to do it.

What did we advise districts and schools to do?

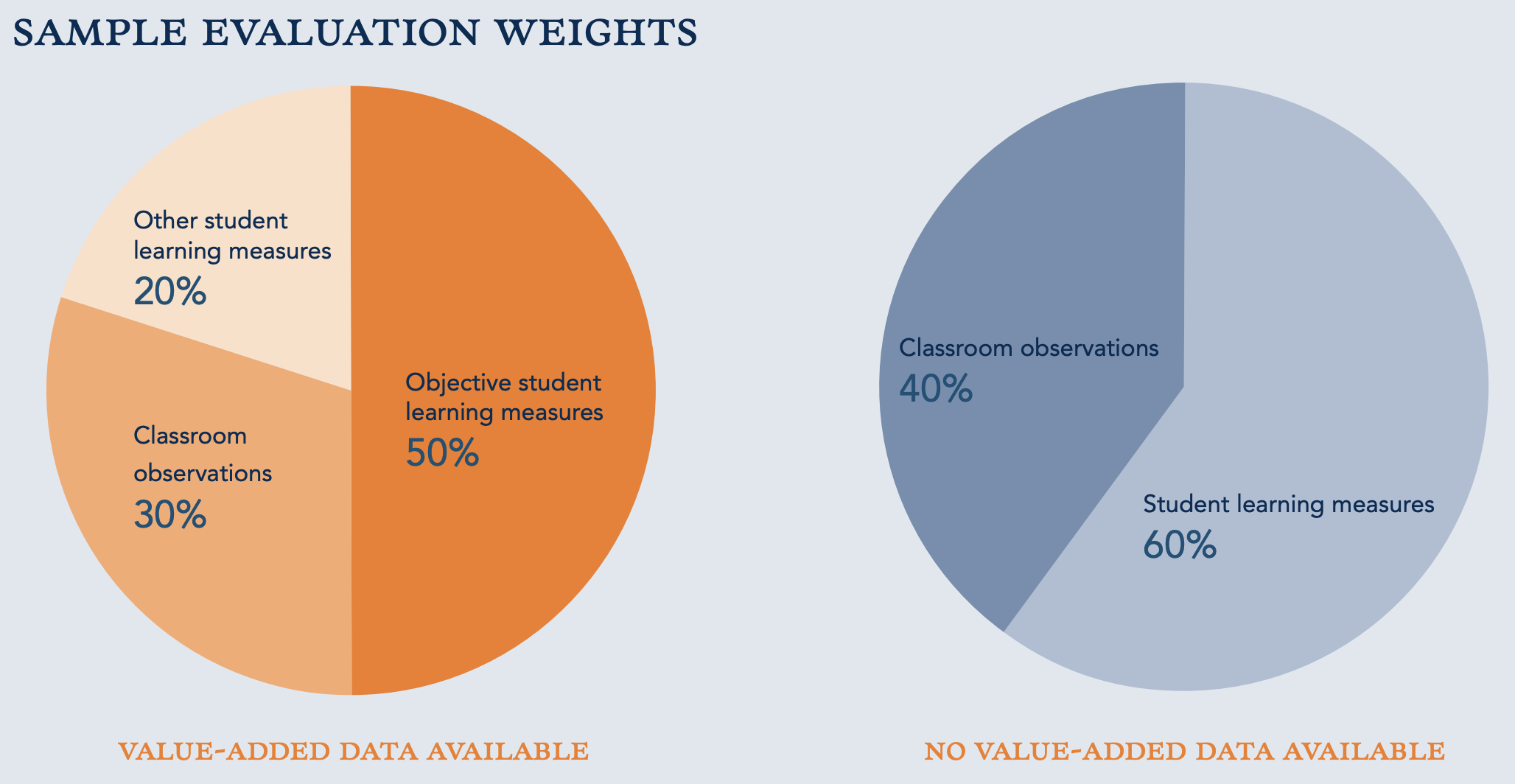

New evaluation systems needed to yield accurate results and hold up when challenged in due process. Unions—which had expressed openness to modernizing evaluations—made clear that the evaluations must be objective. That was their term. New approaches couldn’t rely on the perfunctory classroom observations of principals, who were seen by the unions as keepers of personal vendettas. Standards had to be the same from one classroom and school to the next.

Reformers accepted this challenge with an excess of enthusiasm. In the process, I suspect we also took the bait. To meet the unions’ specs while achieving the goal of differentiating good teaching from bad, we got technocratic.

You can see this in a document we published at TNTP about a year after The Widget Effect. Teacher Evaluation 2.0 was a set of specific recommendations for redesigning evals. Summarized briefly:

- Annual evaluations. If we’re serious about improving instruction, a biennial (or even less frequent) process doesn’t deliver sufficient feedback.

- Clear, rigorous expectations. The bar should be excellence rather than minimally acceptable compliance, e.g., this lesson has a written objective, as some observation protocols required.

- Multiple measures. This was a biggie. The idea of using more than one data source was not controversial, but we suggested placing more weight on objective measures of student achievement—like growth in test scores—than observations. It was a radical departure from the way teachers had been evaluated. We’ll get into this in greater detail before this series is done, I promise.

- Multiple rating categories. Some districts used a simple binary of satisfactory or unsatisfactory. A teacher was A-OK or fired. We recommended four or five levels to create usable differentiation.

- Regular feedback. Evaluations should not depend on a single point in time where the principal breezes through with a clipboard. They should be frequent enough to be fair and drive improvement.

- Evaluations should inform decisions. Not just dismissal for low performers, but also pay advancement for top performers, opportunities to become a lead teacher or coach, and protection for top teachers against being laid off during hard economic times (in the wake of the real estate crash, layoffs were a very real threat).

With these design recommendations handy and snowballing momentum around evaluation, the stage was set for states to overhaul their systems in their quests for Race to the Top funds.

Away we went

Whoa boy, did things take off.

By 2016, forty-four states had passed teacher evaluation legislation. The number of states requiring consideration of evaluation ratings when conferring tenure rose from zero—and that’s not a joke, pre-2009, you got tenure as long as you weren’t fired by a certain deadline—to twenty-three.[i]

In 2009, only four states required student outcomes to be the preponderant component of a teacher’s evaluation. In 2015, the number had grown to sixteen. The number of states requiring outcomes to count for some part of the final rating increased from fifteen to forty-three.

The success of evaluation reform on paper—in the law—was remarkable. How about on the ground? Much more complex. And less successful.

A group of early movers received constant press attention. The highest profile belonged to D.C. Public Schools, which rolled out their system, called IMPACT, for the 2009–10 school year. The state of Tennessee, one of the first winners of Race to the Top, piloted its system in 2010–11 and fully deployed it in 2011–12. Also, beginning in 2009–10, the Gates Foundation underwrote evaluation overhauls in three districts—Hillsborough, Florida; Memphis, Tennessee; and Pittsburgh, Pennsylvania—as well as a collection of California charter networks.[ii]

These systems deserve a deep dive of their own, but we don’t have space to do it here. For our purposes, you mainly need to know that D.C.’s and Tennessee’s efforts were effective by most definitions. Independent research verified that student achievement improved, teachers responded to the new evaluations by getting better, and high performers were retained while low performers left at higher rates.[iii]

The Gates districts did not succeed, largely because enthusiasm for implementing the new systems waned and teachers still generally received very high ratings.

In just about all cases, though, the political blowback against early adopters was fierce.

Along with that heat, two factors slowed implementation of new evaluations more broadly.

The first was the transition to the Common Core State Standards, which most states had committed to adopt. New standards meant new assessments with more rigorous definitions of a “proficient” student. It was not possible to calculate a student’s annual growth using their performance on one type of test in year A and a totally different test in year B. Many states paused consideration of student learning in teacher evaluations while the tests were in transition.[iv]

The second was rising tension around teacher dismissals. This subject received tons of public discussion in the early-mover districts and states. Were teachers really going to be fired? In significant numbers?

In some cases, the answer was yes. Central Falls, Rhode Island, fired all the teachers at its underperforming high school in February 2010. Somewhat ironically, this decision was not predicated on evaluations of individual teachers. It was a blanket move to clear the way for a school turnaround. Teachers were not given any due process. When President Obama embraced this tough love approach, he opened a rift with teachers unions and cemented the notion that improving schools meant firing teachers.

In 2010, the first results from D.C.’s IMPACT system came in. The Washington Post’s headline was “Rhee dismisses 241 teachers in the district.”

As the public perception of evaluation reform became more associated with firing low performers and less with recognizing and rewarding great teachers, political support declined. Teachers unions dropped their neutral/open stance and actively opposed new teacher evaluations. It happened relatively quickly.

In 2014, AFT President Randi Weingarten retreated on the use of student performance and publicly embarrassed the Gates Foundation by refusing to accept its money. Just a few months later, the National Education Association called on Obama’s Secretary of Education, Arne Duncan, to resign for his support of changes to teacher evaluation and tenure.

While facing these defections from the left side of the political spectrum, the evaluation coalition had the same problem on the right. There were multiple drivers, but a major one was the Obama Administration’s offer to states of waivers from NCLB sanctions in exchange for adoption of its favored policies—including evaluation. Conservatives felt that the program amounted to micromanagement of local districts. Before long, Republicans were against just about everything Obama was trying to do in education—even some stuff they’d favored a few years prior.

The wave that propelled teacher evaluation to the top of the education agenda dissipated. By the time most states completed their pauses and began to officially implement their new laws, there wasn’t much heft behind them. In New York, Governor Andrew Cuomo, who had been a hawk on teacher evaluation, reversed his position in 2015 and called for reducing the emphasis on student tests after families began opting their children out of them. Most states similarly backed off.

In an eye-opening 2017 paper titled Revisiting the Widget Effect, Matt Kraft and Allison Gilmour reviewed twenty-four states that had adopted teacher evaluation reforms and reported that less than 1 percent of teachers were being rated unsatisfactory in nearly all of them. After so much drama about teachers getting fired, few were.

A 2023 paper reported that, nationally, evaluation reforms had no effect on student achievement.

It didn’t take long for teacher evaluation to go from the hot new thing to a declared failure.

Why? What were the missteps? What have we learned from them? Those questions will require a post of their own. Next time, we’ll wrap up this series by going there.

[i] NCTQ did a great job tracking all of the policy changes in real time. Their 2015 yearbook is available here. Chad Aldeman also unpacked it for a 2017 retrospective in Education Next.

[ii] There are many more early movers, some of whom took big risks to implement their systems and got notably good results. I don’t mean to shortchange them here by omission. Cincinnati, New Mexico, Newark, and Dallas are just a few.

[iii] In D.C., researchers found that IMPACT achieved “substantial differentiation in ratings” and put pressure on low-performing teachers to improve. When teachers departed, “DCPS has been able to replace low-performing teachers in high-poverty schools with teachers who are substantially more effective.” D.C.’s performance on national tests improved dramatically. In Tennessee, a major study concluded that “evaluation reform contributed to student achievement gains and that evaluation reform operated to improve student achievement through two mechanisms: teacher development and strategic teacher retention.” Tennessee’s ranking on national tests also improved quite a bit. Lynn Olson did a nice write-up for FutureEd here. You can read about the disappointing results for Gates-funded sites here.

[iv] More than a few advocates felt that pushing for new standards and new teacher evaluations concurrently was too much—that the standards should have come first. Then, after teachers got used to them, go for the evaluations. If you wonder how we responded, I invite you to re-read the description of our thinking at the top of this post.

Examining the evolution of the college wage premium—and its biggest beneficiary

As the downsides of a “college for all” perspective become clear, it’s critical that we continue to track how the “college wage premium” (CWP) is evolving, for whom, and at what levels of degree attainment. The CWP is typically understood to mean the difference between average wages earned by workers with a four-year degree versus those earned by workers with a high school diploma. (That said, there’s also been illuminating research on earning differences between holders of bachelor’s and associate degrees.) This latest report from the Federal Reserve Bank of San Francisco gives us another look at CWP (bachelor’s only) over the years from 2000 to 2022.

The descriptive study uses microdata from the Current Population Survey, a longitudinal project of the Census Bureau, which collects a plethora of data each month from roughly 60,000 households. Analysts focus on the quarter of that sample that gets asked every month about wages and work hours, and they combine those responses over the twelve months of each year to yield annual samples comparable across racial and ethnic groups. They adjust the results for differences in the demographic and geographic composition of the sample across groups and over time, and they define the racial and ethnic groups so that none overlap.

Results show that, in 2000, wages for workers with at least a college degree were 68 percent higher on average than wages earned by high school graduates. This was part of a substantial rise in CWP during the three decades starting in the 1980s. However, that rise began to flatten during the decade following the Great Recession of 2007–09. CWP peaked at 79 percent in the mid-2010s but declined to about 75 percent in 2022. While that 4 percentage point drop may sound small, especially given three years of Covid-fueled economic turmoil at the end, it’s substantial in light of the 10 percentage point gain in the CWP between 2000 and 2015.

In looking at racial and ethnic groups, analysts find that CWP is especially large for Asian workers, with college grads earning more than twice what high school graduates earn, a 120 percent premium. The other three racial and ethnic groups averaged between 70 and 80 percent CWP. In the early half of the recovery from the Great Recession, all groups saw gains, but those gains flattened for White workers towards the latter half of the recovery and fell for Hispanic and Black workers. But the premium for Asians continued to rise during that period, which likely reflects differences in their choice of majors, attainment of post-graduate degrees, and subsequent occupations. (Since the onset of the pandemic in 2020, CWP edged down slightly for all groups except Hispanic workers.)
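The premium figures above come down to simple arithmetic on average wages. The following is a minimal sketch of that arithmetic, not the report's actual code: the function names and the wage values are hypothetical, chosen only so the results line up with the percentages quoted in the text.

```python
def college_wage_premium(college_wage: float, high_school_wage: float) -> float:
    """Percent by which the average college wage exceeds the average high school wage."""
    if high_school_wage <= 0:
        raise ValueError("high_school_wage must be positive")
    return (college_wage / high_school_wage - 1.0) * 100.0


def percentage_point_change(start_premium: float, end_premium: float) -> float:
    """Difference between two premiums that are already expressed in percent."""
    return end_premium - start_premium


# Hypothetical hourly wages picked to match the 68 percent premium cited for 2000.
print(round(college_wage_premium(42.0, 25.0), 1))

# Earning 2.2 times the high school wage is the same thing as a 120 percent premium.
print(round(college_wage_premium(2.2, 1.0), 1))

# Change from the mid-2010s peak (79 percent) to 2022 (75 percent), in percentage points.
print(percentage_point_change(79.0, 75.0))
```

Note the distinction the second function makes explicit: a move from 79 percent to 75 percent is a drop of 4 percentage points, not a 4 percent drop.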

Why did CWP plateau during the Great Recession recovery and subsequently decline? The analyses show that more rapid wage growth for high school graduates, rather than slower wage growth for college graduates, is the primary explanation for the flattening or reduction of the premium. Specifically, from 2000 to 2011, real wages picked up for all groups, but they accelerated more for those graduating from high school and for Black and Hispanic workers in particular.

Since 2011, wages for White high school grads and college grads rose roughly the same amount, while wages for Asian college grads rose more rapidly than for Asian high school grads. The one substantive change seen during the first two years since the pandemic’s onset is that real wages for Asian high school grads have now picked up again, too. The researchers conclude that tight labor markets in recent years have changed relative wages and may be altering the perceived benefits of a college education, leading fewer students to pursue college after high school.

It is good news that students with just a high school diploma are earning more today than they have historically. Increased minimum wages, tax and transfer policies, and Covid-era supports also likely contributed to this laudable boost for lower-income Americans. However, given the higher lifetime earnings of college grads—CWP doubles for them over the course of their lives—those students who are motivated, able, and have a good chance of finishing college should go! Those not prepared or less than motivated by the idea of four years spent in college should know their other options—and high schools should do a better job equipping them for those possibilities.

SOURCE: Leila Bengali, Marcus Sander, Robert G. Valletta, and Cindy Zhao, “Falling college wage premiums by race and ethnicity,” Federal Reserve Bank of San Francisco (August 2023).

American learning losses in the pandemic era: A global perspective

A new report from the Hoover Institution’s Education Success Initiative provides another close look at the U.S. education system’s global standing in the aftermath of Covid, focusing specifically on the economic impacts the changes portend.

Analysts Eric Hanushek and Bradley Strauss compare Program for International Student Assessment (PISA) and National Assessment of Educational Progress (NAEP) data from before and after pandemic-related school closures and the temporary shift to remote learning. They show where American students as a whole landed in the achievement distribution and how international standings changed pre- and post-Covid, and they conduct an interesting state-by-state analysis.

Hanushek and Strauss start with the most-recent administration of PISA, which tested the math, reading, and science skills of fifteen-year-old students in eighty-one countries and territories in 2022. To reduce noise in the data, comparisons are pared down to math results only. The United States ranked thirty-fourth among all participants, slightly below the mean score of the combined Organization for Economic Co-operation and Development (OECD) countries and comparable to Malta and the Slovak Republic. We are three-quarters of a standard deviation behind Singapore, the top performer internationally, and half of a standard deviation behind Macao and Taiwan, numbers two and three. While Singapore, Taiwan, Saudi Arabia, and ten others actually showed a gain in PISA math results between 2018 (the last administration prior to Covid) and 2022, the United States was among the large cadre of countries that registered score drops: approximately 15 points. That drop was not as steep as a number of others (Norway shed almost 35 points, Iceland nearly 40), but Hanushek and Strauss calculate its economic impact on American students this way: The average student will have 5 to 6 percent lower lifetime earnings compared to expected earnings had there been no pandemic, unless something is done to fully remediate this learning loss. Absent such a fix, they calculate, the resulting lower-quality future labor force will cost the U.S. economy up to $31 trillion (in 2020 dollars), equivalent to a 3 percent lower GDP throughout the remainder of the century.

2022 math results for NAEP, “The Nation’s Report Card,” are “transformed” by the analysts onto the same scale as PISA math results, creating a putative scale where individual states are ranked in comparison to PISA countries. The best-performing state on NAEP in 2022 was Massachusetts. It would be sixteenth in the world if it were ranked on its own, placing student performance just ahead of the average student in Austria and just behind the average student in the United Kingdom. The next-highest-performing state was Utah, ranking twenty-first between Slovenia and Finland. At the other end of the spectrum, thirteen states—including Oklahoma, West Virginia, Washington, D.C., and New Mexico—fell behind the average student performance in 77th-place Turkey.
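The review does not spell out how the analysts “transformed” NAEP scores onto the PISA scale. One common way to put two tests on a shared scale is linear (mean and standard deviation) linking, sketched below purely as an illustration. It is an assumption that this resembles the report's approach, and every number and name here is hypothetical.

```python
def link_to_pisa_scale(
    naep_score: float,
    naep_mean: float,
    naep_sd: float,
    pisa_mean: float,
    pisa_sd: float,
) -> float:
    """Map a NAEP score to a PISA-scale equivalent by matching standardized positions.

    A state sitting z standard deviations above the national NAEP mean is placed
    z standard deviations above the national PISA mean.
    """
    if naep_sd <= 0 or pisa_sd <= 0:
        raise ValueError("standard deviations must be positive")
    z = (naep_score - naep_mean) / naep_sd
    return pisa_mean + z * pisa_sd


# Hypothetical inputs: a state scoring 280 on NAEP when the national NAEP mean is 270
# (SD 40) and the national PISA mean is 465 (SD 95).
state_equivalent = link_to_pisa_scale(280.0, 270.0, 40.0, 465.0, 95.0)
print(state_equivalent)
```

Once each state has a PISA-scale equivalent, it can be slotted into the list of country averages to produce the kind of putative ranking described above.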

Despite being the top state, Massachusetts was among those states registering more than a 10-point drop in NAEP math scores. Oklahoma, Pennsylvania, Delaware, and Minnesota also registered scores more than 10 points lower in 2022 than in 2018. Fordham’s home state of Ohio was in the middle, dropping between 5 and 7 points overall and finishing just above Norway and the OECD average in the putative international rankings.

“The full explanation of the causes of the differential losses is not available,” Hanushek and Strauss write, “but there is evidence that hybrid and remote instruction related to closures contributed to the distribution of losses.” This becomes more obvious when we consider the widely varying Covid responses from different U.S. states. They then calculate the heterogeneity of impacts on average lifetime earnings—using their previous PISA-based methodology—in each state, finding a 4 percent loss for students whose states were at the top of the score distribution (like Massachusetts and Utah) and a whopping 9 percent loss for those at the bottom (such as Delaware and Oklahoma).

The silver lining around all this bad news is that these losses are not completely irremediable. Improved curricula, high-quality teaching, extended school days, and high-dosage tutoring have all shown promise in helping students catch up and accelerate back to where data indicate they should be. Hanushek and Strauss cite all these and more in their report. Unfortunately, here in 2024 (and even in 2022 when these state and international data were collected), time has already run out for older students, and some level of learning and economic loss has already been cemented into their futures. How many more students will leave high school before we can say we’ve done everything we can to help?

SOURCE: Eric A. Hanushek and Bradley Strauss, “A Global Perspective on U.S. Learning Losses,” Hoover Education Success Initiative (February 2024).

#917: The end of Chevron deference, with Joshua Dunn

On this week’s Education Gadfly Show podcast, Joshua Dunn, Executive Director of the Institute of American Civics at the University of Tennessee, joins Mike and David to discuss how public schools will be affected by the end of the Chevron deference—the judicial doctrine in which courts defer to federal agencies’ reasonable interpretations of ambiguous statutes. Then, on the Research Minute, Amber examines a new paper criticizing the famous STAR class size study.

Recommended content:

- “Fishing for rules” —Joshua Dunn, Education Next

- “The case for the supreme court to overturn Chevron Deference” —Wall Street Journal

- “The Chevron deference is desperately needed” —David Martin, Washington Post

- Karun Adusumilli, Francesco Agostinelli, and Emilio Borghesan, “Heterogeneity and endogenous compliance: Implications for scaling class size interventions,” National Bureau of Economic Research (April 2024).

Feedback Welcome: Have ideas for improving our podcast? Send them to Daniel Buck at [email protected].

The following transcript was created using AI software.

Michael Petrilli:

Welcome to the Education Gadfly Show. I'm your host, Mike Petrilli of the Thomas B. Fordham Institute. Today, Joshua Dunn, executive director of the Institute of American Civics at the University of Tennessee joins us to discuss what the end of the Chevron doctrine could mean for schools. Then on the research minute, Amber reports on a new paper criticizing early research on class size reduction. All this on the Education Gadfly Show.

Hello. This is your host, Mike Petrilli of the Thomas B. Fordham Institute here at the Education Gadfly Show and online at fordhaminstitute.org. And now please welcome our special guest for this week, Josh Dunn. Josh, welcome to the show.

Joshua Dunn (00:55):

Great to be with you Mike.

Michael Petrilli (00:56):

Josh is Executive Director of the Institute of American Civics at the Howard H. Baker School for Public Policy and Public Affairs at the University of Tennessee. My goodness, we've got to use some abbreviations or something to be able to get that all in.

Joshua Dunn (01:11):

Yeah, it's some acronyms would be helpful.

Michael Petrilli (01:13):

Right? It's exciting this, maybe we'll talk a little bit about this, Josh, I know this is a new role for you, but first let's bring in David Griffith, my co-host.

David Griffith (01:22):

Hey Mike, always a pleasure.

Michael Petrilli (01:24):

Yeah, great to see. So Josh, I know you best because we work together at Education Next. Josh writes the Legal Beat column and has for many years where I'm an executive editor. And yeah, you just moved from the University of Colorado, Colorado Springs to the University of Tennessee. My understanding is that this is one of now perhaps a growing number of programs focused on civics at higher education institutions.

Joshua Dunn (01:51):

That's correct. There's several of them around the country now. You have Arizona, Texas, North Carolina, Florida, Mississippi has one. Ohio is, I think five now are starting there. So yeah, there's a growing list of these civics institutes where people are concerned about lack of civic knowledge and decaying civil discourse. And I think that's the mandate for all of us to try to make improvements on both those.

Michael Petrilli (02:16):

Well, that's exciting. Very good. And I heard perhaps a rumor that our friend, Lamar Alexander, may have had a role in encouraging you to come to Tennessee.

Joshua Dunn (02:24):

Yes, yes. He was involved and yeah, he did reach out and I see him fairly regularly now here. Yeah, he's a visitor to the Baker School fairly often.

Michael Petrilli (02:36):

Oh, that's great. Alright, well, give him our best if you see him. We are here to talk about your latest column, which was on the Chevron deference. The Supreme Court had an oral hearing on this a little while ago. They're going to come out with a decision in June. Sounds wonky. Could have a huge impact though actually on the US Department of Education and all the other federal agencies. So let's talk about it on Ed Reform Update. Okay, Josh, so the actual case is about fishing. The old doctrine is about Chevron as in the oil company. What the heck does this have to do with education? Give us the brief tutorial. What is the Chevron deference?

Joshua Dunn (03:20):

So Chevron deference emerged from a case from 1984 where the Supreme Court said that courts should defer to the judgments of administrative agencies when they were interpreting ambiguous federal statutes. And the idea was that, well, generalist judges, they don't have the expertise to go and second guess the decisions of these expert administrators who have all sorts of background knowledge about the particular issues that they have to work out in applying and interpreting these federal statutes. And so there's been some criticism of Chevron deference for, well, really since the beginning, but it's been growing in recent years. And so now there are a couple of cases that came before the court where there's a good chance of the court overturning this Chevron deference. They think that it actually undermines the rule of law, leads to uncertainty with the law. And yeah, this really affects really any federal agency. It's obviously not just the ones before the court right now in this case. I think you could make an argument that the Department of Education might be the most affected if the court overturns Chevron.

Michael Petrilli (04:33):

Yeah, I mean, conservatives have argued for a long time that this is a way of basically empowering the administrative state and that these regulatory agencies then end up making law, and that's supposed to be the job of Congress, right? Now, of course, Congress has not exactly been showing itself to be a highly effective institution recently. And so the notion that Congress is going to have the capacity to get into the weeds on some of these issues, it would need to, in a complicated policy issue, the environment or education, anything else, or be able to make quick changes if they've made a decision that's not working out. Whereas an administrative agency, at least maybe through rulemaking and the like, could be quicker to make adjustments and have the expertise to go deeper. And then there's a role of judges, like you said. So is this about giving power back to Congress saying, no, I'm sorry, Department of Education or any other department, you can't make the laws? Or is it about giving power back to the judges who get to decide what the law means?

Joshua Dunn (05:39):

Exactly. So that's one of the reasons why at least conservatives initially supported Chevron was because they thought it built in political accountability. Because the idea was that presidents could then come in and influence agency rulemaking in these areas. And so you actually would have some control of the process. But I think over time, what we've seen is that often, as you mentioned, the agencies or the concern has been the agencies then just kind of go off on their own and implement their own rules. And so you might not even get a whole lot of political oversight from the executive branch precisely because these agencies are often insulated from direct control by presidents. I mean, presidents can exercise some, but you have career officials in these agencies and they can kind of outlast them and work through them. And so there's some dissatisfaction because of that. So then the question is, yes, what's going to happen here? Is this going to transfer authority to the courts or will courts maybe when you do have disputes over whether or not a statute is ambiguous, do they just kick it back to Congress and say, look, you aren't even entitled to regulate in this area. Congress has to, Congress has to be clear if it wants you to do something in this area.

Michael Petrilli (06:53):

Let's take an example that is fresh from the headlines, right? We're just like Law and Order here and it's Title IX. The Department of Education just put out rulemaking, so proposed regulations around Title IX. We've seen this go back and forth under different administrations, right? And one of the big questions is when Title IX says, I think the words are something like you shall not discriminate on the basis of sex, or if you do, you can lose federal funding. Does that mean that by discriminating on the basis of sex, does that protect kids that are LGBTQ or is that a different set of issues and is that going too far? Is the Department of Education stretching the law when it's interpreting it that way or not?

Joshua Dunn (07:40):

Yeah. So obviously the Department of Education now in the Office for Civil Rights, they're arguing that the Supreme Court's decision in Bostock versus Clayton County gives them some authority to do this. That involved Title VII. But then there's still this issue about whether or not that applies to Title IX. Does the meaning of sex have the same meaning in those two? The court didn't officially decide that in Bostock versus Clayton County. So that's what the Department of Education is saying. Previously though, that didn't get in the way of them back in the Obama administration. They kind of jumped headlong into this and said that it does even before Bostock versus Clayton County. And they just issued a Dear Colleague letter. This time around with the Title IX regulations, they at least went through a kind of an abbreviated rulemaking process where they opened up notice and comment procedures.

(08:29):

They were not as long as you would normally have, but I think it was a month or so and they still got what, around 250,000 public comments on the issue. And I actually think that that was an indication that the Department of Education recognized that some of their past behavior might be coming under suspicion by the courts where they just issue these Dear Colleague letters and run with it as if they'd gone through a regular rulemaking process. But so they did kind of go through this rulemaking process this time, and now as you mentioned, they've just now issued new regulations that should go into effect on August 1st.

Michael Petrilli (09:06):

Right. Alright. But this is, again, if the court comes out and says, okay, we're changing the Chevron deference, we are now going to go back to a time when if it's ambiguous, the judges are going to have to decide it or they're going to have to punt it back to Congress. This could be the kind of thing on Title IX or maybe on some of the discipline policies that we've seen where there's a sense that the regulatory agencies are stretching the meaning of the law to fit their policy preferences, that you may have courts snapping back more aggressively than they did in the past.

Joshua Dunn (09:41):

I think there's no doubt about that. And I think that really, given the current composition of the Supreme Court, it's unlikely to see the Supreme Court say, okay, judges, go in and fill in the blanks now. Since it's not appropriate for agencies to do this, you can now substitute your own judgment about what's best. I don't think we're going to see that. I think it is going to be much more likely for the Supreme Court to say, yes, this is ambiguous, and you have to go to Congress for authorization to engage in rulemaking in this area. So I think that's what's going to happen. Then the complaint will be, well, Congress is kind of busy or they can't get their act together. And I think for the Supreme Court though, given the people who are on it now, they're going to say, well, that's Congress's problem. Yeah, it's not our job to make up for their deficiencies as an institution. So

Michael Petrilli (10:31):

Again, to the Title IX point, you'd say, well, so if we believe that the law should protect LGBTQ kids, for example in schools, that is the job of Congress to pass a law that makes it clear that we're going to now change the language in Title IX.

Joshua Dunn (10:46):

I think so. Although again, it depends on whether or not they're willing to apply this reasoning from that Bostock decision to Title IX. I think that's unlikely though, because you do have this issue of athletics that's looming out there. Even though the Biden administration, they decided not to address athletics, and particularly with transgender students because that was going to be political dynamite going into November. And so with its latest round of proposed regulations, they punted on it. But I think the Supreme Court is smart enough to know that if they actually did apply the reasoning of Bostock to Title IX, that immediately the Department of Education would go in and start issuing those kinds of regulations and saying that schools actually are mandated to allow students to participate on the team that matches their gender identity.

Michael Petrilli (11:38):

So David, how are you feeling about this? We're going to empower re-empower the first branch, right?

David Griffith (11:44):

Yeah, I'm not wild about that. Basically, given the status of the first branch right now, maybe if we could wind back, turn the clock back a few decades, I'd feel differently. But I've never even experienced a functional legislature. I mostly have questions, to be honest. I'm struggling to wrap my head around the implications. And it all starts with this word "ambiguous." In my lifetime, I've seen almost every clause of the Constitution debated. It strikes me that they're all kind of ambiguous. Your average law maybe is a little more clearly written, but I assume that they don't come with this label that says, I am ambiguous, on them. So doesn't it all just kind of boil down to judges' discretion when the

Joshua Dunn (12:27):

Well, some of that. But there can be, you can look at statutes and see where there's a clear authorization to engage in rulemaking in a particular area, and then perhaps others where it's not. And you've seen the court do that with what they've called this major questions doctrine. They say, well, look, this statute, this involves such an enormous area. If Congress actually intended for you to have this authority, they would've been explicit about it. And I'm sympathetic to the idea that, yeah, Congress hasn't been terribly functional, but perhaps part of the reason they haven't been functional is that they've been able to pass off their responsibilities to agencies and courts. And so maybe this will be the thing that forces them to actually do their job.

Michael Petrilli (13:13):

And look, we saw this week with Ukraine and Israel, sometimes when their back is up against the wall, they do act, perhaps much later than they should. But that is one way to look at it. Hey, that is all the time we've got on this discussion. I'm sure we could go much longer. And Josh, it's always a pleasure. I find this legal stuff fascinating, and I appreciate you making it clear for us.

Joshua Dunn (13:39):

Oh, thanks. I hope it clarified some. We can be certain, though, there'll be more litigation to help clarify it for us.

Michael Petrilli (13:48):

Alright, well check out Josh's column on this at Education Next. We will link to it in the show notes. Again, Josh Dunn, executive director at the Institute of American Civics at the University of Tennessee. Thanks so much for joining us, Josh.

Joshua Dunn (14:01):

Thanks, Mike.

Michael Petrilli (14:03):

Alright, now it's time for everyone's favorite Amber's Research Minute. Amber, welcome back to the show.

Amber Northern (14:15):

Thank you, Mike.

Michael Petrilli (14:16):

Just had a great conversation there about legal stuff. And I have to say, in an alternative universe, a different timeline for my life, I think I would've gone to law school.

Amber Northern (14:26):

Really? Wow. I've never heard you say that.

Michael Petrilli (14:28):

I really find this stuff fascinating, especially the constitutional law.

David Griffith (14:35):

You're an armchair economist. Oh, excuse me, lawyer, armchair lawyer. You're also an armchair economist though, Mike.

Michael Petrilli (14:41):

Yes. I do also wish I'd been an economist. I know. Or maybe both. The problem with the law school thing is I don't think you are allowed to go to law school and say, okay, I want to go to law school if someday I get to either be on or argue in front of the Supreme Court.

Amber Northern (14:56):

Wow, that's a high goal.

Michael Petrilli (14:59):

I guess that's not how it works. Right?

Amber Northern (15:02):

Don't sell yourself short, Mike.

Michael Petrilli (15:03):

Yeah. Alright. And maybe it's not too late.

Amber Northern (15:05):

We have these lawyers, by the way, that come and adjudicate these teacher cases on the state board. And one we affectionately call Perry Mason because he acts like he is in front of the Supreme Court, but it's just us. But anyway, he has those same ambitions, Mike, I do believe.

Michael Petrilli (15:24):

Well, what you got for us this week?

Amber Northern (15:25):

Oh man, I'm going to have to get technical on you, at least try not to. But man, there was this NBER study that came out recently. Again, super technical, but it calls into question probably one of the most well-known studies in education ever. Which, what's that?

Michael Petrilli (15:44):

The STAR class size study.

Amber Northern (15:46):

The STAR study on class size.

David Griffith (15:49):

Oh no.

Amber Northern (15:50):

It had serious methodological concerns. Again, I had read some of this but not seen it so well articulated. So just a reminder, if you've been under a rock, Tennessee STAR used an experimental design to assess the impact of class size reduction interventions. This current study breaks down the problems with that original research. It's conducted by three economists at UPenn and Princeton, and they provided in this NBER paper a better way to analyze the results from STAR reliably to gain new and useful information about class size interventions. So hey, I thought that was a good way to frame it. STAR, again, conducted between 1985 and 1989, randomized kindergarten students at participating public schools into one of three class types: small, regular, and regular with a teacher's aide. Evaluations of STAR showed significantly higher scores for students attending the small class type, leading states, if you recall, Tennessee and Colorado, California, to adopt these policies. Reducing class sizes didn't live up to the hype.

(17:05):

It's partly because California especially hired a lot of unqualified teachers to meet the new demands. What else could they do? So these economists go into great detail about the issues with the original methodology. I'm going to try to simplify it, which is not easy. This thing is long. I think it boiled down to this: the two-stage least squares model was poorly aligned with the experimental design because it treated class type as the same as class size, and class type and class size are not the same things, because the school principals had discretion in choosing the target class sizes for each type. So there were substantial differences in compliance, with different schools focusing on both heterogeneous sizes of classes, meaning that the dose of the intervention differed, and heterogeneous class size reductions. So the amount of the reduction also differed between the treatment and the control arms.

(18:10):

So their model here they go identified a weighted average of school specific effects, but those weights depended on each school's compliance with the demands of the experimental design, which, and they didn't follow that, which I'm going to tell you about that in a minute. So all that was endogenous with the class size effectiveness model that they were using because certain types of schools were more or less compliant with the experimental design, which first we're hearing about that, or at least first I'm hearing about it. So what they did instead was they dug into the details of the program design and implementation at each school, and they found that schools actually under complied with the intended implementation of STAR by having class size reductions that were smaller. So the experimental design called for 13 to 17 student range for the treatment and 22 to 25 kids for the control.

(19:14):

So the average reduction in class size between the control and treatment was seven students. But again, it varied a lot across the schools. They had between zero and 12. So okay, that's a pretty big range and doesn't exactly meet the qualifications of the design. And then strangely enough, the schools that had the largest impact, the effects, they could be positive or negative, they showed the lowest compliance with reducing class sizes. So they didn't have the bigger reductions. Alright. Their analysis, I hope we can get through this, they did something called a group random effects approach. So they're trying to fix all this stuff. So this allows 'em to model the class size levels for both the treatment and the control class types because again, there was a lot of discretion, and they found that nearly all of the gains from reducing class size in the STAR study were driven by 29% of the schools in the sample.

(20:19):

In fact, if the 29% of those highly sensitive schools had been omitted from the experiment, their model would've failed to detect any causal effect of class size on test scores. So then they dig into this set of schools. There are 23. So these 23 schools, which they call group two, their mean test score increase for those kids caused by reducing class size by one student equated to an improvement of 0.09 standard deviation. But when you average all across the groups and students, that same figure is 0.02 standard deviation. So it's a lot higher when you're in this higher impact group. A little more about group two, they had larger under-compliance, which means, in my mind, they didn't reduce class sizes as much as intended. So they had bigger classes in general. And the students in group two also attended school between four and five days fewer.

(21:27):

They had not as long a school year compared to the other two groups. And then they close, they've got this long discussion on, we really need to think about how we scale these interventions, and we really need to look into the effects by subgroups of schools and think about targeting our resources and policies where they're most likely to have the largest effects. Because monitoring implementation is a hard thing to do across so many schools and so on and so forth. So another scaling problem, but also some important information I think on this study.
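A rough sketch of the weighting point Amber describes: when a pooled estimate is a weighted average of school-specific effects, and the weights depend on how much each school actually reduced class size, the pooled number can differ from a simple average. All numbers below are hypothetical, and weighting by the size of the reduction is an assumption for illustration only, not the paper's actual weighting scheme.

```python
# Toy illustration with made-up numbers; not data from the STAR study.

def simple_average(effects):
    """Unweighted mean of school-specific effects."""
    return sum(effects) / len(effects)

def weighted_average(effects, weights):
    """Mean of school-specific effects, weighted by the given weights."""
    total = sum(weights)
    return sum(e * w for e, w in zip(effects, weights)) / total

# Hypothetical per-student effects (in standard deviations) for four schools.
effects = [0.09, 0.00, 0.00, 0.01]
# Hypothetical class size reductions (students) achieved at each school.
# Assumption: the school with the large effect complied the least.
reductions = [3, 8, 9, 10]

pooled_simple = simple_average(effects)
pooled_weighted = weighted_average(effects, reductions)

print(f"simple average:   {pooled_simple:.4f}")
print(f"weighted average: {pooled_weighted:.4f}")
```

In this toy setup the high-effect school gets the smallest weight, so the weighted figure falls below the simple average, which is one way a pooled estimate can mask where the effect is concentrated.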

Michael Petrilli (22:08):

Wow. Isn't it the case that there were other studies that used the same data to answer other questions, not just class size? I feel like maybe about teacher effectiveness or other things, and it makes me just wonder about how many other findings we now need to question, whether they might not be in fact true.

Amber Northern (22:30):

Yeah. And I'm sure they probably got into that in this very long background section.

Michael Petrilli (22:38):

Well, and look, and this is not about ancient history here. I mean, we know New York City is going through this right now because unfortunately they were able to get this class size policy through recently that's going to force them to go through and lower class sizes in every school, including in the affluent schools. And a lot of us are worried that we're going to see, again, that's going to lead teachers to transfer from the high poverty schools to the more affluent ones. The high poverty schools are going to have lower quality teachers, and then we're not going to get the results that we want. So I thought you were going to just tell us that they were able to tease out sort of what happens when you scale up. And of course there's variation, but it seems like it's even more fundamental than that, that the actual study had some serious problems and that we need to be much more realistic about what class size reduction can achieve based on that, even if scaled up effectively, even without all of the problems with say, lower quality teachers.

Amber Northern (23:38):

And other folks have pointed out, it was just one in five schools that agreed to participate. And so you would think if you agreed to participate, then you would play by the rules. But there was just a lot of flexibility in how these interventions were carried out.

David Griffith (23:59):

Yeah, it's troubling. I mean, it's not that troubling if you're skeptical of class size reductions, but it is troubling. I guess the fundamental problem, as I understood it, was that the schools that implemented it most diligently saw essentially no effect. And the schools that implemented it sort of halfheartedly saw bigger effects. And that was sort of inexplicable to the researchers.

Amber Northern (24:27):

That's right. Yeah. I mean, if they had larger under-compliance, I'd have to think that through, right? That means they had the bigger class sizes and they had the bigger impact. Right. Am I thinking about that right, David?

Michael Petrilli (24:42):

Or the difference, again, the difference. It could have been that they had small class sizes throughout, right? Or big class sizes throughout. It was just that the differences weren't that large.

David Griffith (24:53):

Yeah. As I said, what you were saying, it was that the differences between the two were smaller in the places where there were bigger effects. Was that what you were saying?

Amber Northern (25:02):

The differences between the Right. The group two had the bigger class sizes because they under complied more. And the other schools that I guess didn't have as high level of under compliance didn't have the effects. It can kind of make your head hurt when you think about it, but I think I'm thinking about it, right?

Michael Petrilli (25:26):

It's the kind of logic puzzle you might find on the LSAT. So again, back to the law school idea.

Amber Northern (25:33):

There's some problems with the study people, and we probably need not put it on such a pedestal. I think that's my takeaway and that, hey, it gets down to a very plain fact about how hard it is to scale anything, even if it was a good intervention.

Michael Petrilli (25:53):

Yep. No, I think that's right. I think that as research has improved, it does mean that it's a higher bar for some of those old studies back in the day. Alright, well we'll need to let that be that, because that is all the time we've got for this week. So until next week,

Amber Northern (26:13):

I'm Amber Northern.

Michael Petrilli (26:14):

And I'm Mike Petrilli at the Thomas B. Fordham Institute signing off.

Cheers and Jeers: April 25, 2024

Cheers

- “College students should study more.” —Matthew Yglesias, Slow Boring

- Students persuade Kevin Bacon to return to the Utah high school where Footloose was filmed. —NPR

- A fiscal cliff is looming, but there are practical steps that schools can take now to help them prepare for budgetary shortfalls. —Chad Aldeman, The 74

Jeers

- “Schools want to ban phones. Parents say no.” —Wall Street Journal

- If a new bill is approved, California will eliminate its last remaining teacher assessment. —EdSource

What we're reading this week: April 25, 2024

- A new survey of American teenagers reveals interesting opinions and trends in cellphone and social media use, bullying in schools, and absenteeism. —EdChoice

- Vying and jockeying for Trump’s secretary of education have begun. —Daily Caller

- “Tennessee’s universal school voucher plan is dead for now, governor acknowledges.” —Chalkbeat

- As Ivy League and prestigious, private universities lose clout and raise tuition, public state schools provide a better alternative. —Nate Silver, Silver Bulletin

- Biden’s new Title IX regulations extend protections to transgender students and change how campuses can adjudicate sexual assault allegations. —Washington Post

Gadfly Archive

The Education Gadfly Weekly: Next, curtail the Chromebooks

The Education Gadfly Weekly: Doing educational equity wrong

The Education Gadfly Weekly: School choice need not mean an expensive windfall for the rich

The Education Gadfly Weekly: Introducing Education Policy Research Barbie

The Education Gadfly Weekly: Our schools have lost their sense of purpose

The Education Gadfly Weekly: Toward a more research-informed charter school application process

The Education Gadfly Weekly: The “no excuses” model is due for a renaissance

The Education Gadfly Weekly: Doing educational equity right: Grading

The Education Gadfly Weekly: Doing educational equity right: The homework gap

The Education Gadfly Weekly: On teacher housing, is the juice worth the squeeze?

The Education Gadfly Weekly: Doing educational equity right – School closures